Towards optimal experimentation in online systems

The Unofficial Google Data Science Blog

APRIL 23, 2024

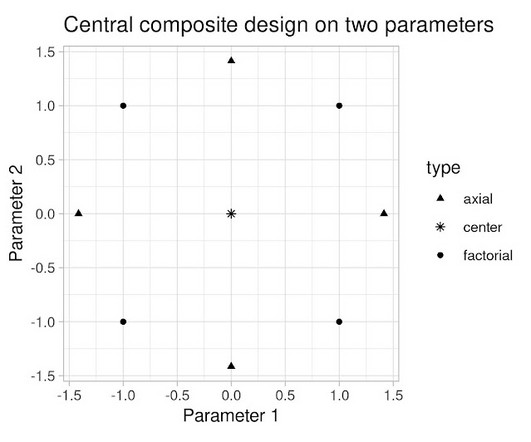

To find optimal values of two parameters experimentally, the obvious strategy would be to experiment with and update them in separate, sequential stages. Our experimentation platform supports this kind of grouped-experiments analysis, which allows us to see rough summaries of our designed experiments without much work.
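
As a rough illustration of that sequential strategy, here is a minimal sketch in Python. Everything in it is an assumption made for the example: the function `tune_sequentially`, the noiseless `toy_metric` with its peak at (2, 3), and the candidate grid are all hypothetical and are not part of our experimentation platform. Stage one picks the best value of the first parameter while the second is held at a starting value; stage two then picks the best value of the second parameter with the first held at its stage-one winner.

```python
from typing import Callable, Sequence, Tuple


def tune_sequentially(
    metric: Callable[[float, float], float],
    a_candidates: Sequence[float],
    b_candidates: Sequence[float],
    b_start: float,
) -> Tuple[float, float]:
    """Tune two parameters in separate, sequential stages (hypothetical helper)."""
    # Stage 1: hold b at its starting value and keep the best-scoring a.
    best_a = max(a_candidates, key=lambda a: metric(a, b_start))
    # Stage 2: hold a at the stage-1 winner and keep the best-scoring b.
    best_b = max(b_candidates, key=lambda b: metric(best_a, b))
    return best_a, best_b


def toy_metric(a: float, b: float) -> float:
    # Hypothetical noiseless response surface, highest at a = 2, b = 3.
    # A real experiment would return a noisy estimate of a business metric.
    return -((a - 2.0) ** 2) - (b - 3.0) ** 2


grid = [0.0, 1.0, 2.0, 3.0, 4.0]
best_a, best_b = tune_sequentially(toy_metric, grid, grid, b_start=0.0)
print(best_a, best_b)
```

On this toy surface the two parameters contribute independently to the metric, so the two stages land on the peak of the grid. That is a property of the example, not a general guarantee.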
