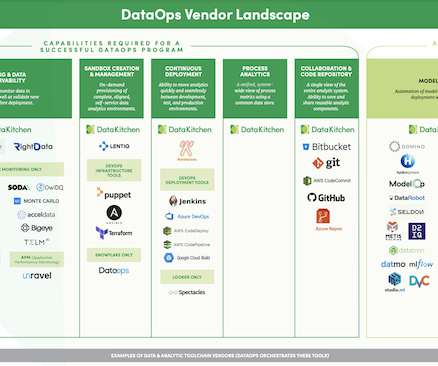

The DataOps Vendor Landscape, 2021

DataKitchen

APRIL 13, 2021

Read the complete blog post below for a more detailed description of the vendors and their capabilities. Because DataOps is such a new category, both overly narrow and overly broad definitions of it abound.

Airflow: an open-source platform to programmatically author, schedule, and monitor data pipelines.
