A Day in the Life of a DataOps Engineer

DataKitchen

OCTOBER 11, 2021

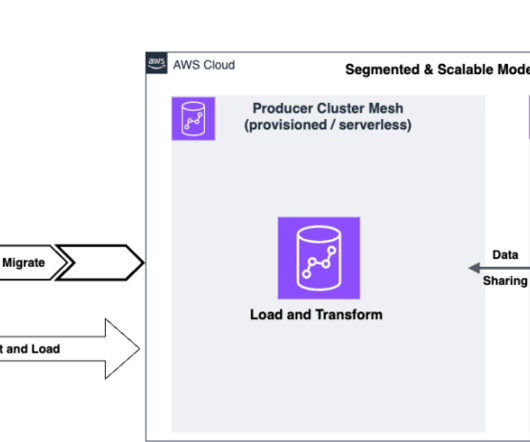

Figure 2: Example data pipeline with DataOps automation. In this project, I automated data extraction from SFTP, public websites, and email attachments. The automated orchestration published the data to an AWS S3 data lake. All the code, the Talend job, and the BI report are version-controlled in Git.
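
As a rough illustration of the flow in Figure 2, here is a minimal Python sketch of an orchestration step that pulls files from several sources and publishes them to a data lake. The project itself used a Talend job, so this is a stand-in and not the actual implementation. The names `Source`, `run_pipeline`, and the `raw/<source>/<date>/<file>` key layout are assumptions made for the example, and the `publish` callable is injected so the sketch runs without AWS credentials.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Dict, Iterable, List, Tuple

# One extracted file: (file name, raw bytes).
ExtractedFile = Tuple[str, bytes]


@dataclass
class Source:
    """A hypothetical data source such as SFTP, a website, or an email inbox."""
    name: str
    extract: Callable[[], Iterable[ExtractedFile]]


def run_pipeline(
    sources: Iterable[Source],
    publish: Callable[[str, bytes], None],
    run_date: date,
) -> List[str]:
    """Extract every file from every source and publish it under a dated key.

    Returns the list of keys that were published, in order.
    """
    published: List[str] = []
    for source in sources:
        for file_name, payload in source.extract():
            # Assumed key layout: raw/<source>/<YYYY-MM-DD>/<file name>
            key = f"raw/{source.name}/{run_date.isoformat()}/{file_name}"
            publish(key, payload)
            published.append(key)
    return published


if __name__ == "__main__":
    # An in-memory dict stands in for the S3 bucket in this sketch.
    lake: Dict[str, bytes] = {}
    demo_sources = [
        Source("sftp", lambda: [("orders.csv", b"id,total\n1,9.99\n")]),
        Source("email", lambda: [("report.xlsx", b"placeholder bytes")]),
    ]
    for key in run_pipeline(demo_sources, lake.__setitem__, date.today()):
        print(key)
```

In a real deployment, `publish` could wrap an S3 upload call, and each `extract` function would hold the SFTP, web, or mailbox logic. Keeping the publisher injectable also makes the orchestration easy to test, which fits the version-controlled, automated approach described in the caption.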
