Complexity Drives Costs: A Look Inside BYOD and Azure Data Lakes

Jet Global

NOVEMBER 5, 2020

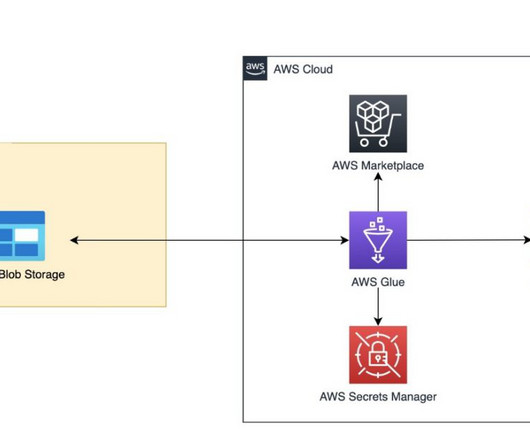

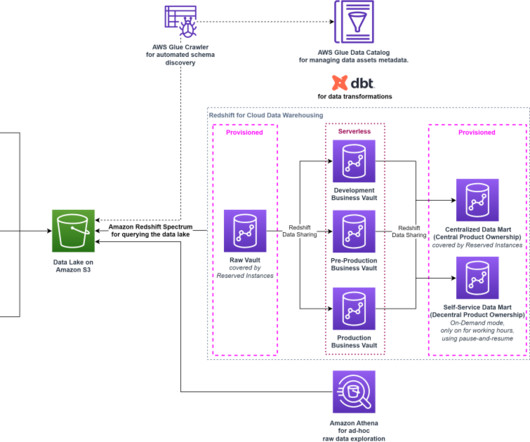

Option 3: Azure Data Lakes. This leads us to Microsoft’s apparent long-term strategy for D365 F&SCM reporting: Azure Data Lakes. They are highly complex, and they were designed for a fundamentally different purpose than financial and operational reporting.
