Ensuring Data Transformation Quality with dbt Core

Wayne Yaddow

MARCH 14, 2025

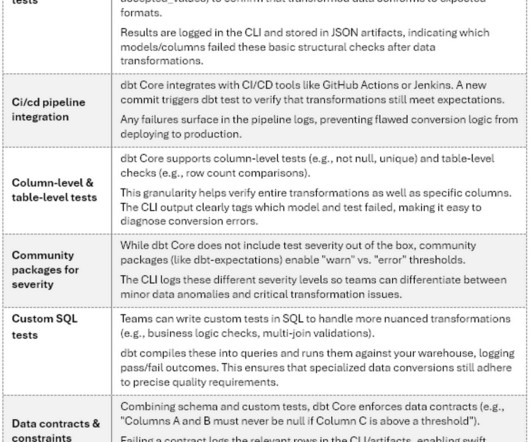

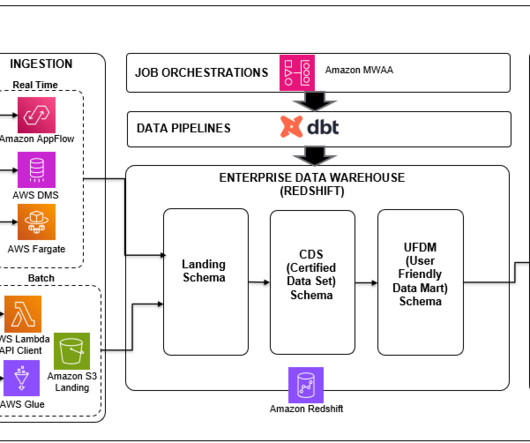

How dbt Core helps data teams test, validate, and monitor complex data transformations and conversions

Photo by NASA on Unsplash

Introduction

dbt Core, an open-source framework for developing, testing, and documenting SQL-based data transformations, has become a must-have tool for modern data teams as data pipelines grow more complex.
