How EUROGATE established a data mesh architecture using Amazon DataZone

AWS Big Data

JANUARY 15, 2025

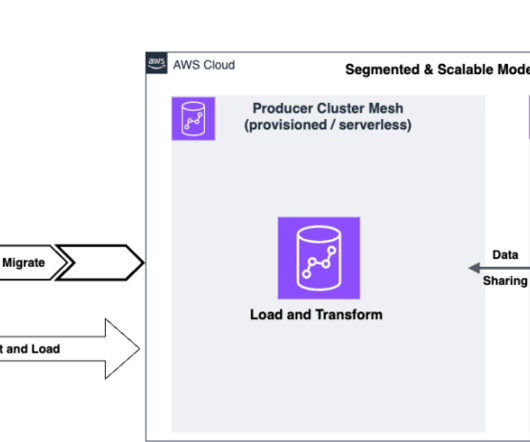

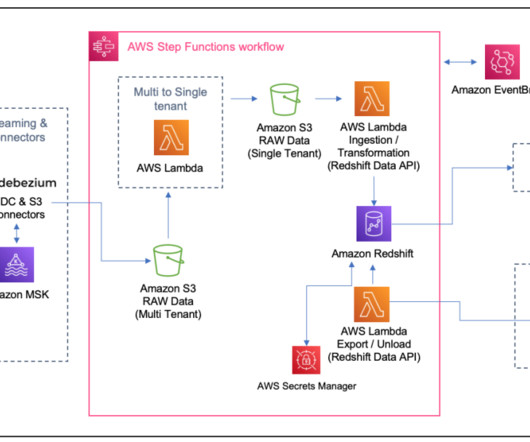

Plug-and-play integration: A seamless, plug-and-play integration between data producers and consumers should make new datasets quickly usable and enable quick proofs of concept, for example in the data science teams. As part of the required data, CHE data is shared using Amazon DataZone.
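To make the consumer side of this requirement more concrete, here is a minimal sketch of how a consuming team might look up a published dataset and request access through the Amazon DataZone API with boto3. It is an illustration only, not EUROGATE's implementation: the helper names, the domain, project, and listing identifiers, and the search text are all hypothetical, and the flow assumes the producer has already published the asset to the DataZone catalog.

```python
from typing import Any, Optional


def build_search_request(domain_id: str, search_text: str,
                         max_results: int = 10) -> dict[str, Any]:
    """Keyword arguments for the DataZone SearchListings operation."""
    return {
        "domainIdentifier": domain_id,
        "searchText": search_text,
        "maxResults": max_results,
    }


def build_subscription_request(domain_id: str, listing_id: str,
                               project_id: str, reason: str) -> dict[str, Any]:
    """Keyword arguments for the DataZone CreateSubscriptionRequest operation."""
    return {
        "domainIdentifier": domain_id,
        "requestReason": reason,
        # The published listing the consumer wants access to.
        "subscribedListings": [{"identifier": listing_id}],
        # The consumer project that should receive access.
        "subscribedPrincipals": [{"project": {"identifier": project_id}}],
    }


def subscribe_to_first_match(domain_id: str, search_text: str,
                             project_id: str, reason: str) -> Optional[str]:
    """Search the catalog and request a subscription to the first asset hit.

    Returns the subscription request ID, or None if nothing matched.
    Requires boto3 and AWS credentials; it is not called at import time.
    """
    import boto3  # imported lazily so the helpers above work without the SDK

    client = boto3.client("datazone")
    result = client.search_listings(**build_search_request(domain_id, search_text))
    for item in result.get("items", []):
        listing = item.get("assetListing")
        if listing is None:
            continue  # skip any non-asset listing types
        response = client.create_subscription_request(
            **build_subscription_request(
                domain_id, listing["listingId"], project_id, reason
            )
        )
        return response["id"]
    return None


if __name__ == "__main__":
    # Hypothetical identifiers, shown only to illustrate the request shape.
    print(build_subscription_request(
        "dzd_example", "listing-123", "project-456",
        "Proof of concept on CHE data",
    ))
```

The subscription request still has to be approved on the producer side before the consumer project can use the data, so governance stays with the data owner while discovery and the access request remain self-service.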
