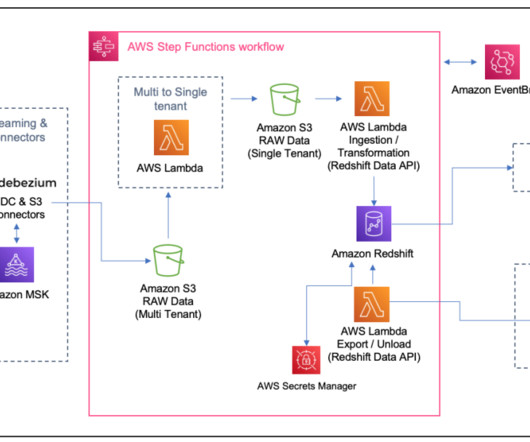

Unlock scalability, cost-efficiency, and faster insights with large-scale data migration to Amazon Redshift

AWS Big Data

AUGUST 1, 2024

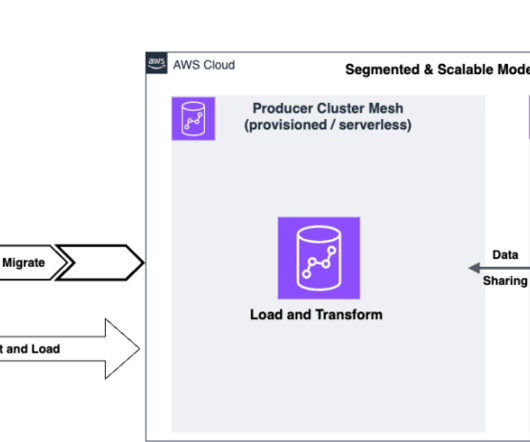

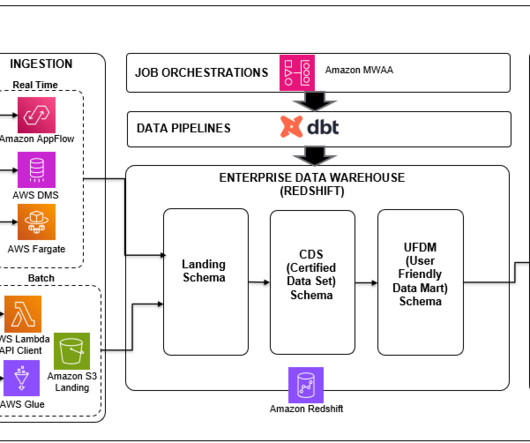

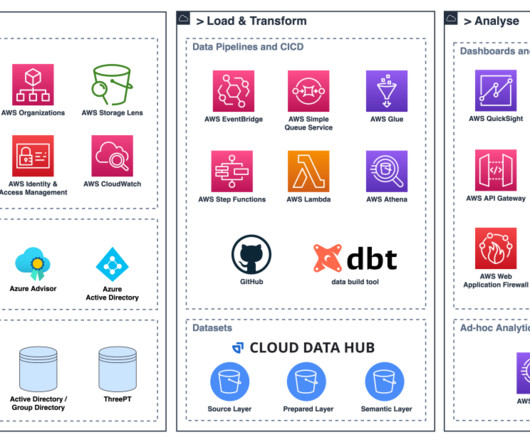

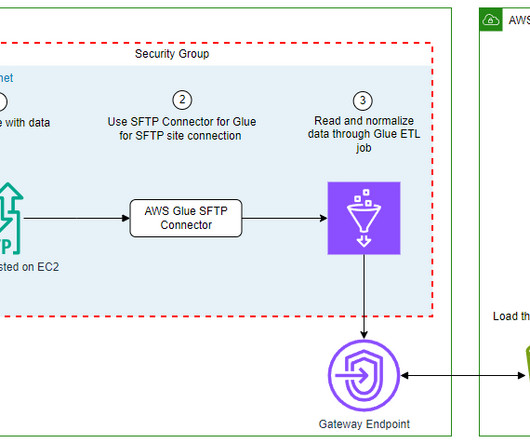

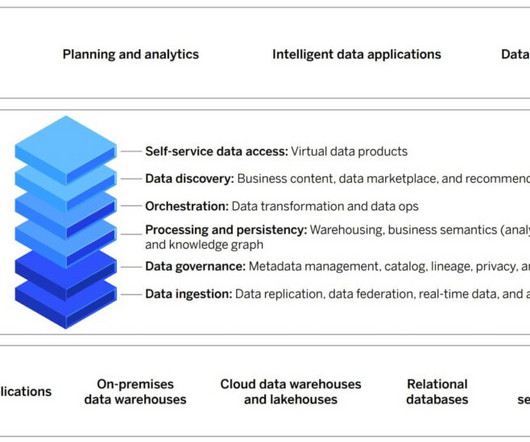

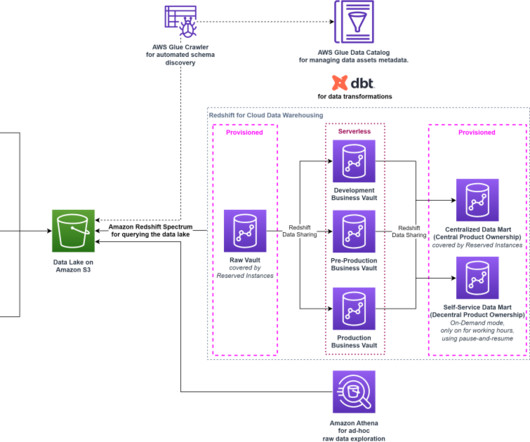

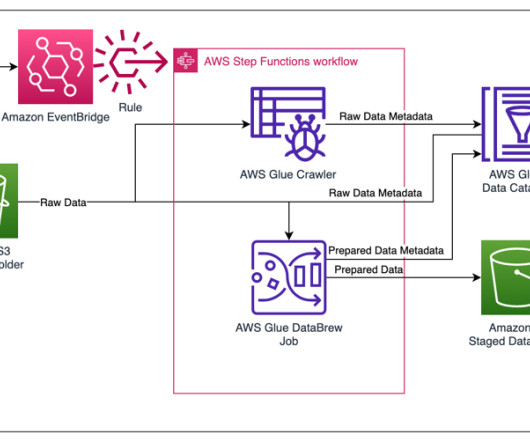

Large-scale data warehouse migration to the cloud is a complex and challenging endeavor that many organizations undertake to modernize their data infrastructure, enhance data management capabilities, and unlock new business opportunities. This makes sure the new data platform can meet current and future business goals.

Let's personalize your content