MLOps and DevOps: Why Data Makes It Different

O'Reilly on Data

OCTOBER 19, 2021

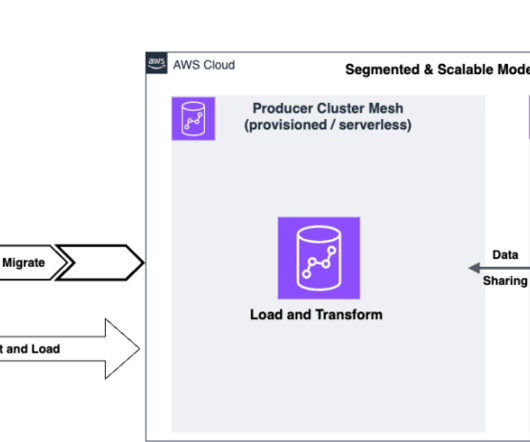

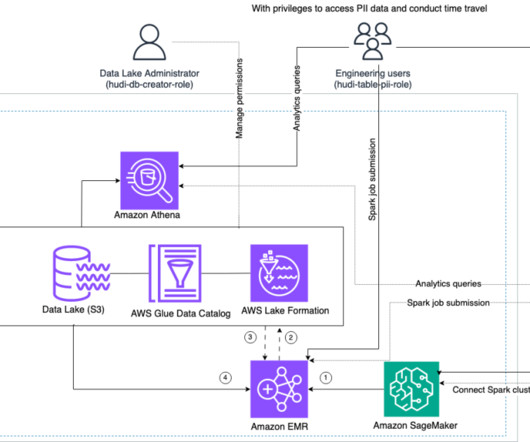

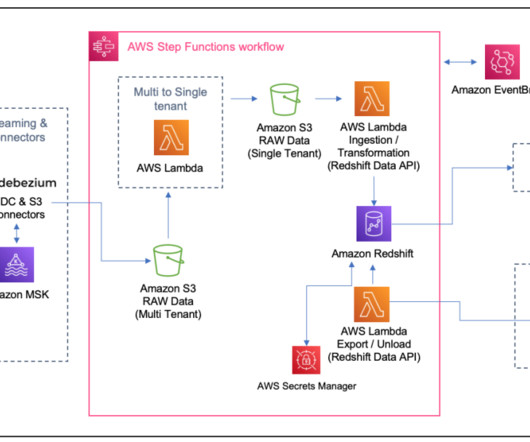

Data is at the core of any ML project, so data infrastructure is a foundational concern. ML use cases rarely dictate the master data management solution, so the ML stack needs to integrate with existing data warehouses. On top of the data layer come the software development layers, such as versioning and model development.
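As a rough illustration of integrating with an existing warehouse rather than maintaining a separate copy of the data, the sketch below reads training rows through a standard database connection. It is only a sketch under stated assumptions: the in-memory SQLite database stands in for a real warehouse, and the table `customer_events`, its columns, and the helper `load_training_rows` are hypothetical names that do not come from the article.

```python
import sqlite3


def load_training_rows(conn, query):
    """Run a query against the warehouse and split rows into features and labels.

    Assumes the last column of every returned row is the label.
    """
    rows = conn.execute(query).fetchall()
    features = [row[:-1] for row in rows]
    labels = [row[-1] for row in rows]
    return features, labels


# Stand-in for an existing data warehouse (hypothetical schema and data).
warehouse = sqlite3.connect(":memory:")
warehouse.execute(
    "CREATE TABLE customer_events (feature_a REAL, feature_b REAL, label INTEGER)"
)
warehouse.executemany(
    "INSERT INTO customer_events VALUES (?, ?, ?)",
    [(0.1, 1.0, 0), (0.9, 3.0, 1)],
)
warehouse.commit()

# The ML code only sees a connection and a query, not where the data lives.
features, labels = load_training_rows(
    warehouse,
    "SELECT feature_a, feature_b, label FROM customer_events ORDER BY rowid",
)
```

Because the loader depends only on a connection and a query, the same code could in principle point at a production warehouse through its own database driver, which keeps the ML stack a consumer of the existing data infrastructure.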
