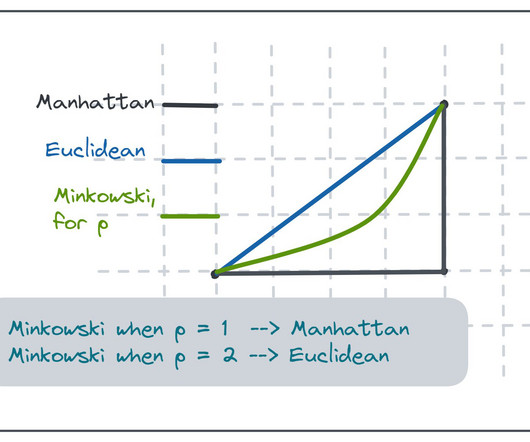

4 Types of Distance Metrics in Machine Learning

Analytics Vidhya

FEBRUARY 24, 2020

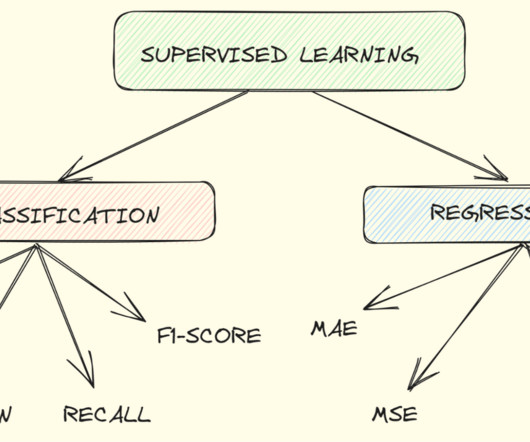

Distance metrics are a key part of several machine learning algorithms. They are used in both supervised and unsupervised learning, generally to [...] The post 4 Types of Distance Metrics in Machine Learning appeared first on Analytics Vidhya.
